Caching Strategies

Read Strategies

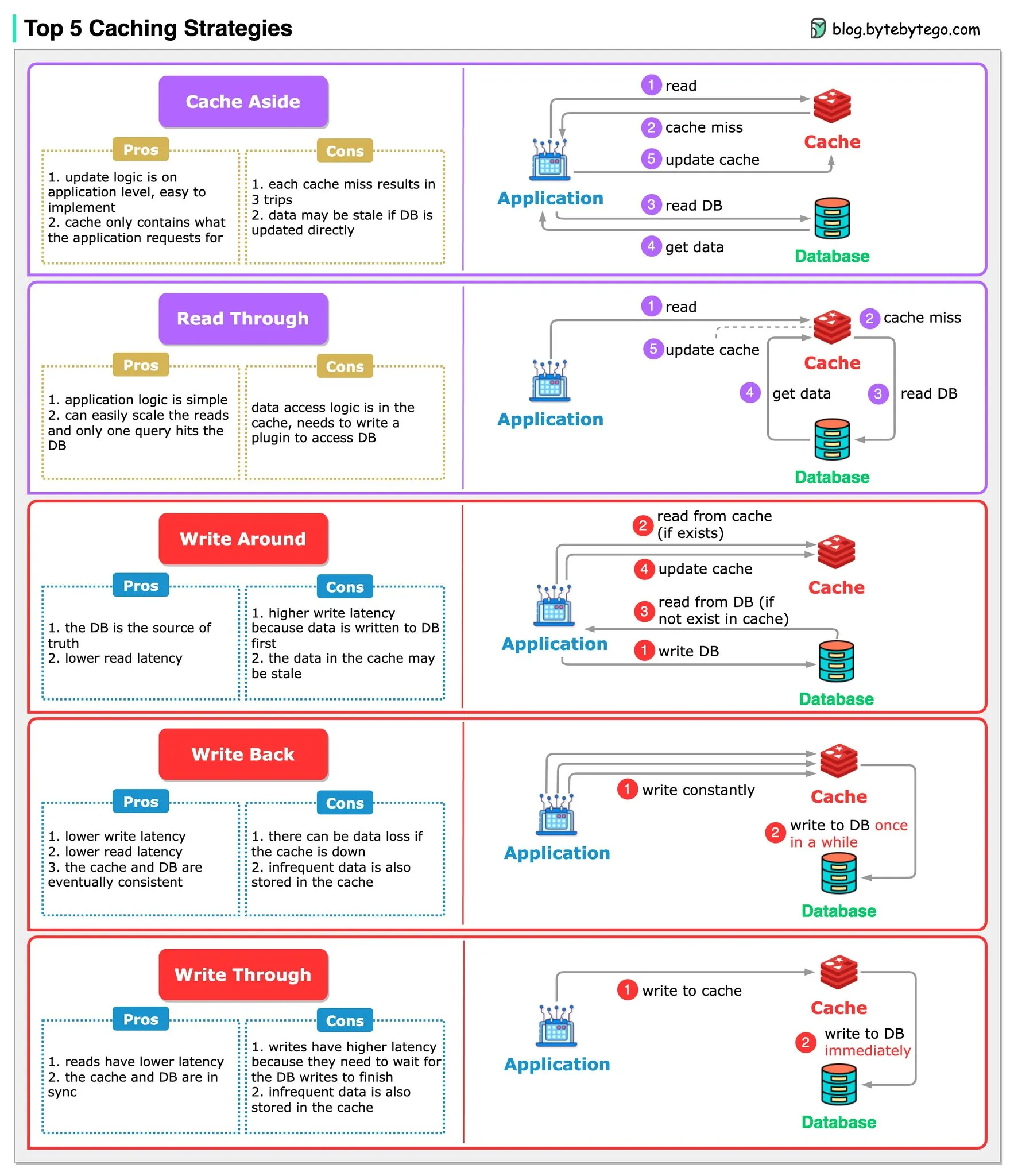

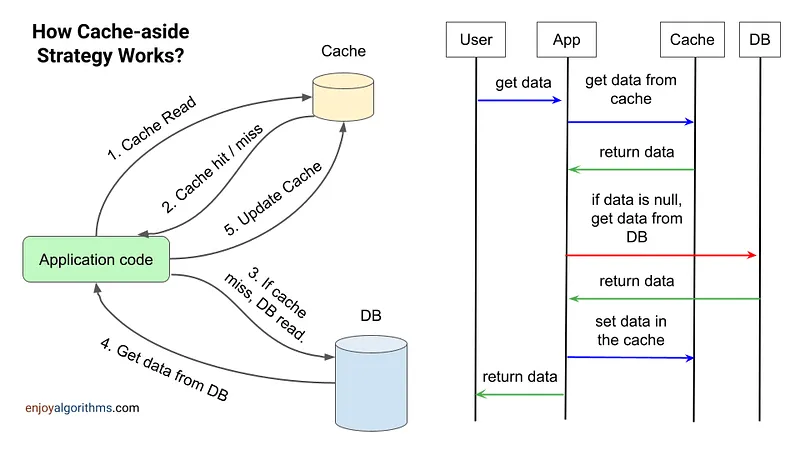

Cache-Aside (Lazy Loading) 📖

- How it works: The application checks the cache first; on a cache miss it fetches the data from the DB and stores it in the cache.

- Usage: When cache misses are rare, or when reads can tolerate the latency of a cache miss plus a DB read.
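The cache-aside read path described above could be sketched roughly as follows. This is a minimal illustration, not a production implementation: `SlowDB`, `cache_aside_get`, and the in-memory dict used as the cache are hypothetical stand-ins for a real database and cache client.

```python
from typing import Optional


class SlowDB:
    """Hypothetical stand-in for the database; counts how often it is read."""

    def __init__(self, rows: dict[str, str]) -> None:
        self.rows = dict(rows)
        self.reads = 0

    def read(self, key: str) -> Optional[str]:
        self.reads += 1
        return self.rows.get(key)


def cache_aside_get(key: str, cache: dict[str, str], db: SlowDB) -> Optional[str]:
    """The application itself checks the cache, then falls back to the DB."""
    if key in cache:              # cache hit: no DB access
        return cache[key]
    value = db.read(key)          # cache miss: read from the DB
    if value is not None:
        cache[key] = value        # populate the cache for later reads
    return value


db = SlowDB({"user:1": "Ada"})
cache: dict[str, str] = {}
first = cache_aside_get("user:1", cache, db)   # miss, goes to the DB
second = cache_aside_get("user:1", cache, db)  # hit, served from the cache
```

Only the first call touches the database; the second is served from the cache, which is why the strategy works best when misses are rare.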

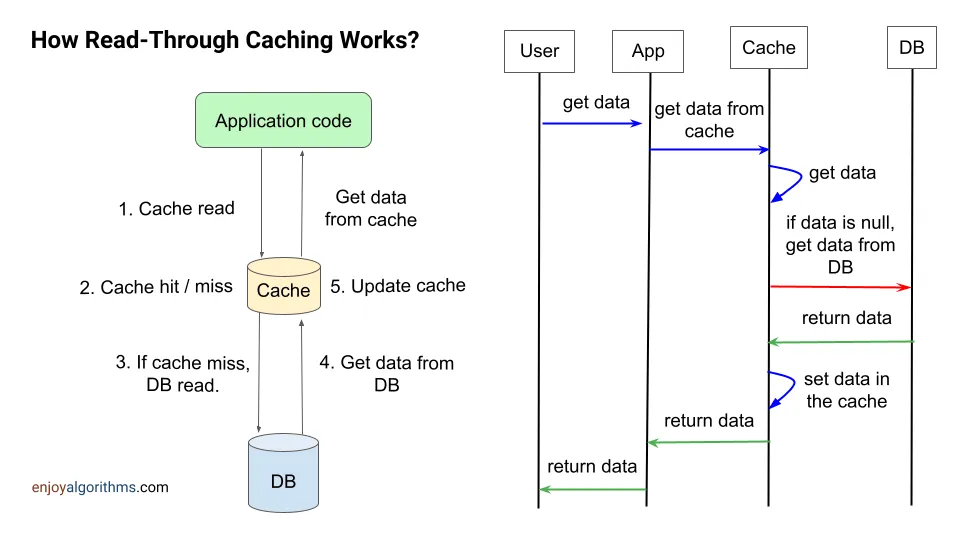

📚 Read Through

- How it works: The cache itself handles DB reads, transparently fetching missing data on a cache miss.

- Usage: Abstracts DB logic from app code. Keeps cache consistently populated by handling misses automatically.
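To contrast this with cache-aside, here is a small sketch in which the cache owns the loading logic and the caller only ever talks to the cache. The class name `ReadThroughCache`, its `loader` parameter, and the dict used as the database are assumptions made for illustration.

```python
from typing import Callable, Optional


class ReadThroughCache:
    """Cache that owns the loader, so callers never query the DB directly."""

    def __init__(self, loader: Callable[[str], Optional[str]]) -> None:
        self._loader = loader
        self._store: dict[str, str] = {}
        self.loads = 0  # number of times the loader (the DB) was consulted

    def get(self, key: str) -> Optional[str]:
        if key not in self._store:
            self.loads += 1
            value = self._loader(key)   # the cache, not the app, fetches from the DB
            if value is None:
                return None
            self._store[key] = value
        return self._store[key]


database = {"post:7": "hello"}          # hypothetical backing store
feed_cache = ReadThroughCache(database.get)
a = feed_cache.get("post:7")            # miss: loaded through the cache
b = feed_cache.get("post:7")            # hit: no loader call
```

The application code here contains no database logic at all, which is the main difference from the cache-aside approach.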

Write Strategies

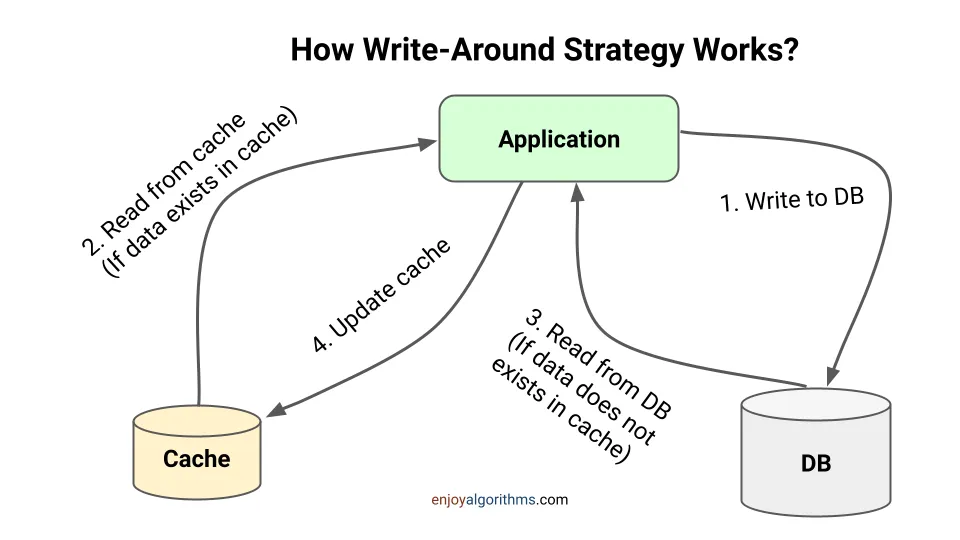

✍️ Write Around

- How it works: Writes bypass the cache and go directly to the DB.

- Usage: When written data won’t immediately be read back from cache.

- This keeps the cache from being flooded with writes that are never re-read. The disadvantage is that a read of recently written data results in a cache miss, so it must be served from slower back-end storage with higher latency.
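The trade-off above can be seen in a small sketch. The two dicts and the `write_around` and `read` helpers are hypothetical; `read` returns a `"hit"` or `"miss"` label only to make the behaviour visible.

```python
from typing import Optional

cache: dict[str, str] = {}      # hypothetical in-memory cache
database: dict[str, str] = {}   # hypothetical backing store


def write_around(key: str, value: str) -> None:
    """Write straight to the DB; the cache is not touched."""
    # Note: if the key were already cached, that copy would stay stale
    # until it is invalidated or expires (not handled in this sketch).
    database[key] = value


def read(key: str) -> tuple[Optional[str], str]:
    """Cache-first read that reports whether it was a hit or a miss."""
    if key in cache:
        return cache[key], "hit"
    value = database.get(key)
    if value is not None:
        cache[key] = value
    return value, "miss"


write_around("log:1", "started")
first = read("log:1")    # first read after the write misses the cache
second = read("log:1")   # later reads are served from the cache
```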

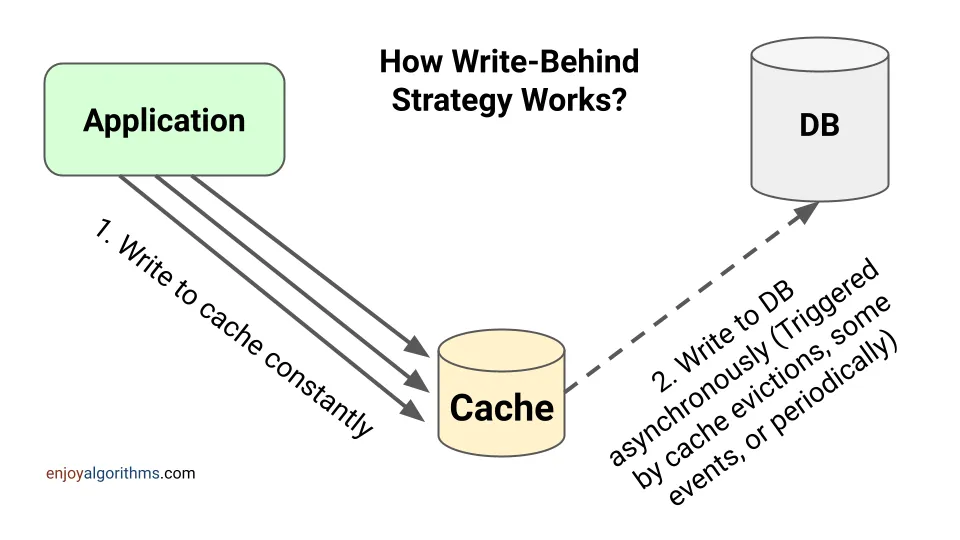

🔄 Write Back (Delayed Write)

- How it works: Writes go to the cache first and are written to the DB asynchronously later.

- Usage: In write-heavy environments where slight data loss is tolerable.

- This gives low latency and high throughput for write-intensive applications. However, the speed comes with a risk of data loss in a crash or other adverse event, because until the write reaches the DB the only copy of the data is in the cache.
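A rough sketch of the idea follows, assuming a hypothetical `WriteBackCache` class. The explicit `flush()` call stands in for whatever background or timed sync a real system would use.

```python
from typing import Optional


class WriteBackCache:
    """Buffers writes in memory and copies them to the DB only on flush()."""

    def __init__(self, database: dict[str, str]) -> None:
        self._db = database
        self._store: dict[str, str] = {}
        self._dirty: set[str] = set()   # keys written but not yet persisted

    def put(self, key: str, value: str) -> None:
        self._store[key] = value        # fast path: cache only
        self._dirty.add(key)

    def get(self, key: str) -> Optional[str]:
        return self._store.get(key)

    def flush(self) -> int:
        """Persist all dirty keys; returns how many DB writes were made."""
        flushed = 0
        for key in list(self._dirty):
            self._db[key] = self._store[key]
            flushed += 1
        self._dirty.clear()
        return flushed


database: dict[str, str] = {}           # hypothetical backing store
likes = WriteBackCache(database)
likes.put("likes:9", "41")
likes.put("likes:9", "42")              # second write overwrites the buffered value
before_flush = dict(database)           # still empty: nothing persisted yet
written = likes.flush()                 # one DB write covers both updates
```

If the process crashed before `flush()` ran, both updates would be lost, which is the data-loss risk this strategy accepts in exchange for fewer and later DB writes.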

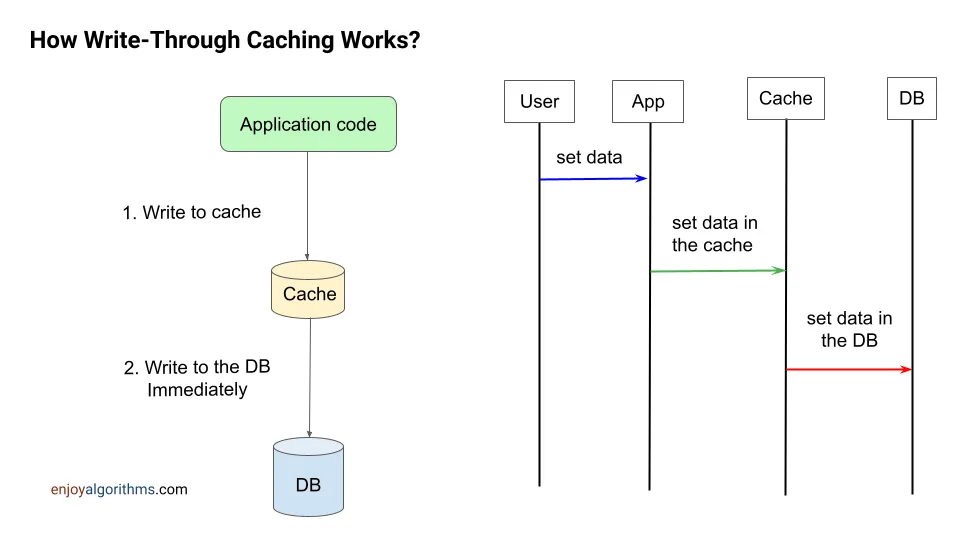

💾 Write Through

- How it works: Writes the data to the cache, then synchronously updates the DB before the write is acknowledged.

- Usage: When data consistency is critical.

- Under this scheme data is written into the cache and the corresponding database at the same time.

- The cached data allows for fast retrieval, and since the same data gets written in the permanent storage, we will have complete data consistency between cache and storage.

- Also, this scheme ensures that nothing will get lost in case of a crash, power failure, or other system disruptions.

- Write through minimizes the risk of data loss, but every write must complete in both the cache and the DB before success is returned to the client, so write operations have higher latency.
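As a minimal sketch of the scheme described in the bullets above, assuming a hypothetical `WriteThroughCache` class backed by plain dicts:

```python
from typing import Optional


class WriteThroughCache:
    """Every put() updates the cache and the DB before it returns."""

    def __init__(self, database: dict[str, str]) -> None:
        self._db = database
        self._store: dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        self._store[key] = value   # update the cache
        self._db[key] = value      # then synchronously update the DB
        # A real system would also need to handle a failed DB write
        # (for example by evicting the cached entry); not shown here.

    def get(self, key: str) -> Optional[str]:
        return self._store.get(key)


database: dict[str, str] = {}      # hypothetical backing store
orders = WriteThroughCache(database)
orders.put("order:1", "paid")
cached = orders.get("order:1")
persisted = database["order:1"]
```

The caller only gets control back after both writes have happened, which is where the extra write latency comes from.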

Real-Life Usage:

- Cache Aside + Write Through 🌐: This ensures consistent cache/DB sync while allowing fine-grained control over cache population during reads. However, the immediate database writes might strain the DB.

- Read Through + Write Back 📈: This abstracts the DB and handles bursty write traffic well by delaying sync. However, it risks larger data loss if the cache goes down before the buffered writes are synced to the database.

E-commerce Website: Cache Aside + Write Through

Scenario: Fast content delivery is crucial for customer satisfaction on an e-commerce platform. It’s also vital to keep transactions like orders and payments consistent.

- Cache Aside for Product Listings: Speeds up load times for frequently viewed products. When product details in the database are updated, the cache is invalidated to ensure users get the most recent information.

- Write Through for Transactions: Ensures that every transaction made by a customer is immediately updated in both the cache and the database, keeping critical data consistent and up-to-date.
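The invalidation step mentioned for product listings could look roughly like this. The function names, the product key, and the dict-based cache and database are all hypothetical and only illustrate the idea.

```python
from typing import Optional

product_cache: dict[str, str] = {}                 # hypothetical cache
product_db: dict[str, str] = {"sku-1": "Mug, 8"}   # hypothetical product table


def get_product(sku: str) -> Optional[str]:
    """Cache-aside read for product details."""
    if sku in product_cache:
        return product_cache[sku]
    details = product_db.get(sku)
    if details is not None:
        product_cache[sku] = details
    return details


def update_product(sku: str, details: str) -> None:
    """Update the DB, then invalidate the cached copy so it cannot go stale."""
    product_db[sku] = details
    product_cache.pop(sku, None)   # next read reloads from the DB


before = get_product("sku-1")          # loads and caches the old details
update_product("sku-1", "Mug, 9")      # DB updated, cache entry dropped
after = get_product("sku-1")           # reloaded from the DB
```

Dropping the entry rather than updating it in place keeps the write path simple; the cost is one extra cache miss on the next read of that product.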

Social Media App: Read Through + Write Back

Scenario: A social media app with high volumes of content posts and interactions faces periods of intense traffic.

- Read Through for Feeds: Manages user feeds by caching accessed data for quick retrieval. Missing data triggers an automatic database fetch and cache update, ensuring feeds load quickly.

- Write Back for User Posts: Handles high volumes of user-generated content by caching posts and comments first, then asynchronously writing them to the database. This method absorbs peak loads efficiently, with strategies in place to minimize data loss.